W3Schools Online Web HTML & JavaScript Tutorials

Tag: WebDev

Web development news and tutorials

OpenX.com

Open-source ad server like Google Ad Manager – Take control of your advertising

Koders.com

Open Source Code Search Engine

RobotReplay.com

The Next Generation of Web Analytics

Google.com/AgencyToolkit

Google Agency Toolkit

Google.com/AdManager

Ad Manager: a banner ad manager for the web

Google.com/Analytics

Free web analytics for your website.

Turn data into decisions with unified measurement.

20 May 2026 @ 4:00 pm

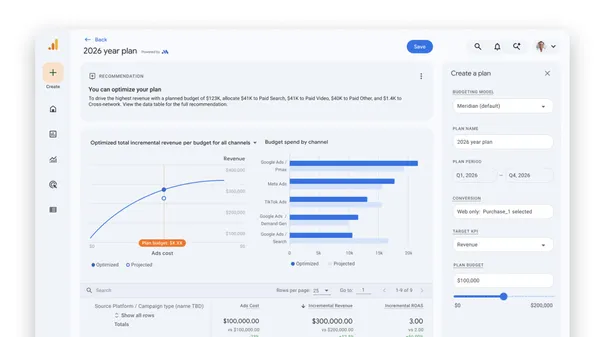

We’re bringing Meridian, our open-source MMM, to Google Analytics and introducing Future Long-Term Conversions.

Albertsons Media Collective brings retail signals to YouTube with Google’s Commerce Media Suite.

27 April 2026 @ 12:00 pm

Supercharge performance across the shopper journey by connecting Albertsons data with Google’s AI and scale.

5 ways to collaborate with our agentic advisors

25 March 2026 @ 4:00 pm

Top tips and best practices for collaborating with Ads Advisor and Analytics Advisor.

Google’s Commerce Media Suite: Where retailer insights meet the power of YouTube

24 March 2026 @ 1:00 pm

Supercharge performance across the full customer journey by connecting Kroger’s shopper insights with Google’s AI and scale.

Google NewFront 2026: introducing the Gemini advantage

23 March 2026 @ 3:00 pm

An overview of how Gemini models bring unmatched value to Google Marketing Platform.

This March 23, we’ll be introducing the Gemini advantage in Google Marketing Platform.

26 February 2026 @ 4:00 pm

A look ahead at Google NewFront 2026, and how Gemini models bring more value for programmatic advertisers and biddable tools.

The first episode of the Ads Decoded podcast dives into how marketers can leverage analytics and AI for better results.

28 January 2026 @ 2:00 pm

Welcome to the first full season of Ads Decoded, a podcast hosted by Ads Product Liaison Ginny Marvin to bring questions from advertisers straight to the people designin…

New retailers are joining Google’s Commerce Media Suite.

20 January 2026 @ 2:00 pm

Best Buy and Shipt are now sharing commerce audiences with Google’s Commerce Media Suite.

New biddable capabilities for live sports with Display & Video 360

12 January 2026 @ 4:00 pm

A look at the latest features to help brands connect with customers in a busy year for live sports.

Google’s AI advisors: agentic tools to drive impact and insights

12 November 2025 @ 5:00 pm

An overview of Ads Advisor and Analytics Advisor, two tools coming to English-language accounts this December.

ShowMeDo.com

Learning Python, Linux, Java, Ruby and more with Videos, Tutorials and Screencasts